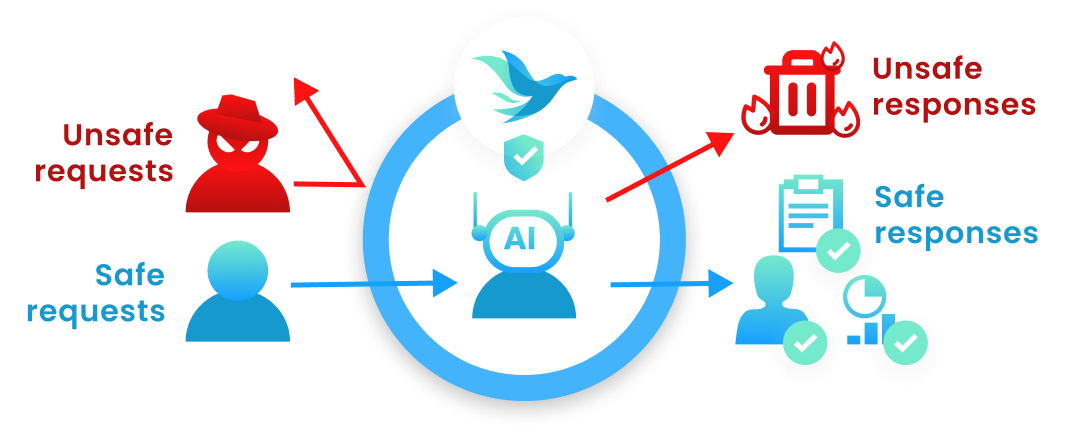

Guardrails

The FireGuard Guardrails are accessed via the FireGuard v1 API. This page documents creating a conversation, running input and output guardrails, and the Copilot conversation helper. To create a project from JSON settings, see Create Projects (API). All routes on this page use the base path https://api.fireraven.ai/public/fireguard/v1/.

For an example of how to integrate FireGuard, see the demo repository.

Before implementing the FireGuard Guardrails, configure policies and security guardrails (and optionally topics for in-product monitoring) on your project in the FireGuard app. Blocking behavior for the public API follows that project configuration: policies and security guardrails can allow or block messages. Topic relevance is available in the app for analytics and monitoring. The input_guardrails and output_guardrails responses include policies_guardrail_results and security_guardrail_results as documented on this page.

v1.1 URLs

If you still call https://api.fireraven.ai/public/fireguard/v1.1/..., the API responds with HTTP 307 redirecting to the matching /public/fireguard/v1/... path (same HTTP method). Prefer using v1 URLs directly in new integrations.
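If an existing integration stores v1.1 URLs, one way to avoid the extra redirect round trip is to rewrite them before sending. The following is a minimal sketch; `to_v1_url` is a hypothetical helper, not part of any FireGuard client, and it only assumes the two base paths named above.

```python
V1_1_PREFIX = "https://api.fireraven.ai/public/fireguard/v1.1/"
V1_PREFIX = "https://api.fireraven.ai/public/fireguard/v1/"


def to_v1_url(url: str) -> str:
    """Rewrite a legacy v1.1 URL to its v1 equivalent; leave other URLs unchanged."""
    if url.startswith(V1_1_PREFIX):
        # Keep everything after the versioned prefix (path and query string).
        return V1_PREFIX + url[len(V1_1_PREFIX):]
    return url
```

The path and query string are preserved as-is, which mirrors the "matching path" behavior of the redirect.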

FireGuard v1 API

The FireGuard v1 API provides a unified architecture for guardrails with improved structure and flexibility.

Creating a conversation (v1)

POST https://api.fireraven.ai/public/fireguard/v1/conversation

Create a conversation for organizing and tracking your users' interactions with FireGuard.

Query parameter

project_id string (required)

The Project ID of your project. This can be found in your project settings under the General tab.

Body parameters

name string or null (optional)

The name of the conversation. Useful for organizing conversations.

Default: API Conversation {Date ISO8601}

description string or null (optional)

The description of the conversation.

Returns

Metadata of the created conversation.

id string

The unique identifier of the conversation.

name string

The name of the conversation.

description string or null

The description of the conversation.

created_at string

The ISO8601 date string of when the conversation was created.

is_client boolean

Indicates if this is a client conversation. Always true for API-created conversations.

Example

curl -X 'POST' \
  'https://api.fireraven.ai/public/fireguard/v1/conversation?project_id=<your-projectId>' \
  -H 'X-Api-Key: <your-apikey>' \
  -H 'Content-Type: application/json' \
  -d '{
  "name": "My first v1 conversation",
  "description": "A conversation created using the v1 API"
}'

{
  "id": "00000000-0000-0000-0000-000000000000",
  "name": "My first v1 conversation",
  "description": "A conversation created using the v1 API",
  "created_at": "1999-12-31T00:00:00.000Z",
  "is_client": true
}
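The same call can be sketched in Python with only the standard library. The function names below are hypothetical, and the sketch assumes that omitting name or description from the body is equivalent to leaving them unset, since both are documented as optional.

```python
import json
import urllib.parse
import urllib.request

BASE_URL = "https://api.fireraven.ai/public/fireguard/v1/"


def build_create_conversation_request(project_id, api_key, name=None, description=None):
    """Build (but do not send) the POST request for /conversation."""
    body = {}
    if name is not None:
        body["name"] = name
    if description is not None:
        body["description"] = description
    query = urllib.parse.urlencode({"project_id": project_id})
    return urllib.request.Request(
        BASE_URL + "conversation?" + query,
        data=json.dumps(body).encode("utf-8"),
        headers={"X-Api-Key": api_key, "Content-Type": "application/json"},
        method="POST",
    )


def create_conversation(project_id, api_key, name=None, description=None):
    """Send the request and return the parsed conversation metadata."""
    request = build_create_conversation_request(project_id, api_key, name, description)
    with urllib.request.urlopen(request) as response:
        return json.load(response)
```

The id field of the returned dictionary is the conversation_id expected by the guardrail endpoints.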

Creating or reusing a conversation (Microsoft Copilot)

POST https://api.fireraven.ai/public/fireguard/v1/conversation_copilot

Use this when integrating with Microsoft Copilot. It creates a new FireGuard conversation or reuses an existing one tied to the same Copilot conversation id, so messages and guardrails stay consistent across Copilot sessions.

Query parameters

project_id string (required)

The Project ID of your project (same as /conversation).

conversation_copilot_id string (required)

The identifier of the conversation in Copilot (your app should pass the id Copilot uses for that chat).

Body parameters

Same as Creating a conversation: optional name and description.

Returns

Same shape as Creating a conversation: id, name, description, created_at, is_client.

Example

curl -X 'POST' \
  'https://api.fireraven.ai/public/fireguard/v1/conversation_copilot?project_id=<your-projectId>&conversation_copilot_id=<copilot-conversation-id>' \
  -H 'X-Api-Key: <your-apikey>' \
  -H 'Content-Type: application/json' \
  -d '{
  "name": "Copilot-linked conversation",
  "description": "Optional"
}'
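When the Copilot conversation id is built into the URL programmatically, it is safer to URL-encode it in case it contains characters that are not URL-safe. A minimal sketch, with a hypothetical function name:

```python
import json
import urllib.parse
import urllib.request

BASE_URL = "https://api.fireraven.ai/public/fireguard/v1/"


def build_copilot_conversation_request(project_id, conversation_copilot_id, api_key,
                                       name=None, description=None):
    """Build (but do not send) the POST request for /conversation_copilot."""
    # urlencode escapes both query values, so ids with '/', '&' or spaces stay intact.
    query = urllib.parse.urlencode({
        "project_id": project_id,
        "conversation_copilot_id": conversation_copilot_id,
    })
    body = {key: value
            for key, value in (("name", name), ("description", description))
            if value is not None}
    return urllib.request.Request(
        BASE_URL + "conversation_copilot?" + query,
        data=json.dumps(body).encode("utf-8"),
        headers={"X-Api-Key": api_key, "Content-Type": "application/json"},
        method="POST",
    )
```

Calling this twice with the same conversation_copilot_id produces the same URL, which is what lets the endpoint reuse the existing FireGuard conversation.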

Input Guardrails (v1)

POST https://api.fireraven.ai/public/fireguard/v1/input_guardrails

Execute input guardrails. Which guardrails run (policies and/or security) is determined only by your project’s saved configuration in FireGuard: policies attached with block on input, and whether the security guardrail is enabled for input.

Query parameter

conversation_id string (required)

The unique identifier of the conversation. This can be obtained from the /public/fireguard/v1/conversation endpoint.

Body parameters

messages_history array (required)

The complete conversation history, used as context for analyzing the last message. The last message in the array is the one being evaluated.

{
  "role": "string", // Role of the message. Value is `user`, `system`, or `assistant`
  "content": "string" // Content of the message
}

guardrails array (optional)

If present, each item must be { "type": "policies_guardrail" } or { "type": "security_guardrail" }. This field does not select which guardrails run; that is determined by your project settings, so you can omit guardrails entirely.

images array (optional)

Optional. Up to 6 images associated with the current user turn (the last message in messages_history). They are included in policies guardrail evaluation when your project runs input policies. Each item:

base64 string (required): Base64-encoded image bytes only (do not include a data:image/...;base64, prefix).

mimeType string (required): Image MIME type, in the form image/... (for example image/png or image/jpeg).

name string (optional): A label or original file name.

Each base64 string is limited to about 7 MB of characters. The security guardrail still runs on text derived from messages_history only.
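The image constraints above can be checked client-side before sending. The sketch below is illustrative only: the helper names are hypothetical, and the 7 * 1024 * 1024 character cutoff is an assumption standing in for "about 7 MB", since the exact limit is not stated.

```python
import base64

MAX_IMAGES = 6
MAX_BASE64_CHARS = 7 * 1024 * 1024  # assumed reading of "about 7 MB of characters"


def strip_data_url_prefix(value: str) -> str:
    """Drop a leading 'data:image/...;base64,' prefix if one is present."""
    if value.startswith("data:") and "," in value:
        return value.split(",", 1)[1]
    return value


def make_image_item(raw_bytes: bytes, mime_type: str, name=None) -> dict:
    """Encode raw image bytes into one entry of the images array."""
    if not mime_type.startswith("image/"):
        raise ValueError("mimeType must have the form image/...")
    encoded = base64.b64encode(raw_bytes).decode("ascii")
    if len(encoded) > MAX_BASE64_CHARS:
        raise ValueError("base64 string exceeds the assumed size limit")
    item = {"base64": encoded, "mimeType": mime_type}
    if name is not None:
        item["name"] = name
    return item


def build_input_body(messages_history, images=()) -> dict:
    """Assemble the JSON body: images sit at the top level, next to messages_history."""
    images = list(images)
    if len(images) > MAX_IMAGES:
        raise ValueError("at most 6 images are accepted")
    body = {"messages_history": list(messages_history)}
    if images:
        body["images"] = images
    return body
```

Keeping images out of messages_history[].content matches the request shape described in this section, where content is a plain string.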

Returns

The result of the input guardrail analysis.

is_safe boolean

Overall result: true only if every executed guardrail that applies reports safe (policies and security, when enabled).

input_request object

The input message that was processed, with its persisted ID. This object contains text only for the evaluated turn (the last message in messages_history). Image bytes are not returned here and are not nested under content.

How to pass images: send them in the same JSON body as top-level images (alongside messages_history), as documented in the images section above. Do not embed base64 or MIME data inside messages_history[].content—the API expects plain string content there and uses images for multimodal policy checks.

{
  "id": "string", // Unique identifier of the persisted message
  "role": "string", // Role: "user" or "assistant"
  "content": {
    "text": "string", // Original message text (same turn as evaluated)
    "text_with_context": "string" // Message text with context
  }
}

policies_guardrail_results object (optional)

Present when the policies guardrail ran and returned at least one policy in the policies array. If there are no policy rows, this key may be omitted.

Each policy in policies may include policy_violation_message when a violation message applies to that policy.

{
  "policies": [
    {
      "id": "string",
      "name": "string",
      "description": "string",
      "criticality": "string", // "low", "medium", "high", or "critical"
      "detection_threshold": 0.1,
      "detection_is_above_threshold": true,
      "is_default": false,
      "is_archived": false,
      "created_at": "1999-12-31T00:00:00.000Z",
      "updated_at": "1999-12-31T00:00:00.000Z",
      "archived_at": null,
      "status": "string", // "success", "error", or "warning"
      "value": 0.9546,
      "is_safe": true,
      "policy_violation_message": null
    }
  ],
  "is_safe": true,
  "timestamp": "1999-12-31T00:00:00.000Z"
}

security_guardrail_results object (optional)

Present when the security guardrail is enabled for input on the project and was executed.

{
  "is_safe": true,
  "timestamp": "1999-12-31T00:00:00.000Z",
  "value": 0.12,
  "security_violation_message": null
}

When the message is blocked by security, is_safe is false and security_violation_message may contain the message shown to the user (per project configuration).

Example (text only)

curl -X 'POST' \
  'https://api.fireraven.ai/public/fireguard/v1/input_guardrails?conversation_id=<your-conversationId>' \
  -H 'X-Api-Key: <your-apikey>' \
  -H 'Content-Type: application/json' \
  -d '{
  "messages_history": [
    {
      "role": "system",
      "content": "You are a helpful assistant."
    },
    {
      "role": "user",
      "content": "What is the weather today?"
    }
  ]
}'

{
  "is_safe": true,
  "input_request": {
    "id": "00000000-0000-0000-0000-000000000000",
    "role": "user",
    "content": {
      "text": "What is the weather today?",
      "text_with_context": "What is the weather today?"
    }
  },
  "policies_guardrail_results": {
    "policies": [
      {
        "id": "00000000-0000-4000-0000-000000000000",
        "name": "Example policy",
        "description": "Example description",
        "criticality": "medium",
        "detection_threshold": 0.1,
        "detection_is_above_threshold": false,
        "is_default": false,
        "is_archived": false,
        "created_at": "1999-12-31T00:00:00.000Z",
        "updated_at": "1999-12-31T00:00:00.000Z",
        "archived_at": null,
        "status": "success",
        "value": 0.05,
        "is_safe": true,
        "policy_violation_message": null
      }
    ],
    "is_safe": true,
    "timestamp": "1999-12-31T00:00:00.000Z"
  },
  "security_guardrail_results": {
    "is_safe": true,
    "timestamp": "1999-12-31T00:00:00.000Z",
    "value": 0.08,
    "security_violation_message": null
  }
}
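A response like the one above can be reduced to a block decision plus any violation messages. The sketch below assumes only the documented response fields; `summarize_guardrail_result` is a hypothetical helper, and it treats both result objects as possibly absent because they are documented as optional.

```python
def summarize_guardrail_result(result: dict) -> dict:
    """Return whether the message is blocked and any violation messages found."""
    messages = []

    # policies_guardrail_results may be omitted when there are no policy rows.
    policies = result.get("policies_guardrail_results") or {}
    for policy in policies.get("policies", []):
        message = policy.get("policy_violation_message")
        if message:
            messages.append(message)

    # security_guardrail_results is present only when the security guardrail ran.
    security = result.get("security_guardrail_results") or {}
    message = security.get("security_violation_message")
    if message:
        messages.append(message)

    # The top-level is_safe is the overall verdict across executed guardrails.
    return {"blocked": not result["is_safe"], "messages": messages}
```

Relying on the top-level is_safe for the decision, and on the nested objects only for messages, keeps the caller independent of which guardrails the project has enabled.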

Example (text and images)

Replace <base64-png-without-data-url-prefix> with the raw base64 payload of your image (for example from base64 -w0 file.png on Linux).

curl -X 'POST' \
  'https://api.fireraven.ai/public/fireguard/v1/input_guardrails?conversation_id=<your-conversationId>' \
  -H 'X-Api-Key: <your-apikey>' \
  -H 'Content-Type: application/json' \
  -d '{
  "messages_history": [
    {
      "role": "system",
      "content": "You are a helpful assistant."
    },
    {
      "role": "user",
      "content": "Does this image comply with our content policy?"
    }
  ],
  "images": [
    {
      "base64": "<base64-png-without-data-url-prefix>",
      "mimeType": "image/png",
      "name": "screenshot.png"
    }
  ]
}'

Output Guardrails (v1)

POST https://api.fireraven.ai/public/fireguard/v1/output_guardrails

Execute output guardrails on assistant text. Which guardrails run is determined only by your project’s saved configuration: policies with block on output, and whether the security guardrail is enabled for output.

Query parameter

conversation_id string (required)

The unique identifier of the conversation where the input message was stored.

Body parameters

input_id string (required)

The unique identifier of the input message. This ID is returned from the input_guardrails endpoint in the input_request.id field.

output string (required)

The text output from your LLM that needs to be validated.

guardrails array (optional)

Optional. Allowed types in the schema are policies_guardrail and security_guardrail. Which guardrails run follows your project settings.

Returns

The result of the output guardrail analysis.

is_safe boolean

Overall result when policies and/or security guardrails apply.

output_request object

The output message that was processed, with its persisted ID.

{
  "id": "string", // Unique identifier of the persisted message
  "role": "string", // Role: "user" or "assistant"
  "content": {
    "text": "string" // Output message text
  }
}

output_message_id string or null

Identifier of the persisted output message (for monitoring and linking).

policies_guardrail_results object (optional)

Same structure as the policies guardrail results on the input endpoint (see above). Omitted if there are no policy rows.

security_guardrail_results object (optional)

Same structure as the security guardrail results on the input endpoint (see above), when security output guardrail is enabled and executed.

Example

curl -X 'POST' \
  'https://api.fireraven.ai/public/fireguard/v1/output_guardrails?conversation_id=<your-conversationId>' \
  -H 'X-Api-Key: <your-apikey>' \
  -H 'Content-Type: application/json' \
  -d '{
  "input_id": "00000000-0000-0000-0000-000000000000",
  "output": "The weather forecast is 24°C with a mix of sun and cloud for the day."
}'

{
  "is_safe": true,
  "output_request": {
    "id": "00000000-0000-0000-0000-000000000000",
    "role": "assistant",
    "content": {
      "text": "The weather forecast is 24°C with a mix of sun and cloud for the day."
    }
  },
  "output_message_id": "00000000-0000-0000-0000-000000000001",
  "policies_guardrail_results": {
    "policies": [
      {
        "id": "00000000-0000-4000-0000-000000000000",
        "name": "Financial advice",
        "description": "The text contains financial advice.",
        "criticality": "critical",
        "detection_threshold": 0.1,
        "detection_is_above_threshold": false,
        "is_default": false,
        "is_archived": false,
        "created_at": "1999-12-31T00:00:00.000Z",
        "updated_at": "1999-12-31T00:00:00.000Z",
        "archived_at": null,
        "status": "success",
        "value": 0.05,
        "is_safe": true,
        "policy_violation_message": null
      }
    ],
    "is_safe": true,
    "timestamp": "1999-12-31T00:00:00.000Z"
  },
  "security_guardrail_results": {
    "is_safe": true,
    "timestamp": "1999-12-31T00:00:00.000Z",
    "value": 0.03,
    "security_violation_message": null
  }
}
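Putting the two endpoints together, one possible request flow is: check the input, call your LLM only if the input is safe, then check the output using the input_request.id returned by the first call. The sketch below is one way to wire this up, not a reference implementation. `make_http_post`, `guarded_reply`, the `generate` callable (your LLM), and the fallback text are all hypothetical names introduced for illustration.

```python
import json
import urllib.parse
import urllib.request
from typing import Callable

BASE_URL = "https://api.fireraven.ai/public/fireguard/v1/"

# (endpoint path, query parameters, JSON body) -> parsed JSON response
Post = Callable[[str, dict, dict], dict]


def make_http_post(api_key: str) -> Post:
    """Return a transport that POSTs JSON with the X-Api-Key header."""
    def post(path: str, query: dict, body: dict) -> dict:
        request = urllib.request.Request(
            BASE_URL + path + "?" + urllib.parse.urlencode(query),
            data=json.dumps(body).encode("utf-8"),
            headers={"X-Api-Key": api_key, "Content-Type": "application/json"},
            method="POST",
        )
        with urllib.request.urlopen(request) as response:
            return json.load(response)
    return post


def guarded_reply(post: Post, generate: Callable[[list], str], conversation_id: str,
                  messages_history: list, fallback: str = "Sorry, I can't help with that.") -> str:
    """Run input guardrails, generate a reply, then run output guardrails."""
    query = {"conversation_id": conversation_id}

    checked = post("input_guardrails", query, {"messages_history": messages_history})
    if not checked["is_safe"]:
        return fallback  # blocked before the LLM is ever called

    output = generate(messages_history)

    # input_id links the output check to the persisted input message.
    verdict = post("output_guardrails", query,
                   {"input_id": checked["input_request"]["id"], "output": output})
    return output if verdict["is_safe"] else fallback
```

Passing the transport in as `post` keeps the flow testable without network access; in an application you might call `guarded_reply(make_http_post(api_key), my_llm, conversation_id, history)`, where `my_llm` is your own function.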